The world of IT has changed forever. It wasn't long ago that Microsoft's cloud-based email service was seen as unsuitable for large corporations. Then Microsoft started signing up companies such as Lowe's to move their email to the cloud. Suddenly, the CIOs who still wanted to run their own Exchange servers looked woefully out of touch. Fast forward to today, and Office 365, Microsoft's cloud email service, serves over 120 million business users. The security and latency concerns have been addressed, and it is business as usual.

And that was just the beginning. More applications and verticals followed. ERP, Databases, Analytics - and now High Performance Computing.

We now live in a world where 80% of enterprises are adopting a cloud-first policy, marking an inflection point in the evolution of enterprise cloud.

As with any change of this magnitude, there will be winners and losers. And just like any change, the winners will be the ones who adapt to the new world. Because the rewards for adapting are huge.

You need a scalable, secure, cost-effective way to run your engineering simulations, so you picked Microsoft's cloud platform: Azure. Azure HPC has become the de facto choice for High Performance Computing in the cloud. But setting up your simulations on Azure requires more than simply signing an Enterprise Agreement. You need to carefully plan your approach, deploy the right technology components and resources, and put the correct measures in place so you can reap the huge benefits to come.

Cloud computing is a modern option that complements your workstation and traditional on-premises datacenter. The cloud vendor, in this case Microsoft, takes over the responsibility for hardware purchase and maintenance and provides a wide variety of platform services that you can use. You lease the hardware and software services you need on an as-needed basis. This has the effect of converting your capital expenses into operational expenses. It also allows you to lease access to specialized hardware and software resources that would be too expensive to purchase.

As an engineering organization, you need to decide exactly what you are moving to the cloud. If the answer is "my Ansys simulations", then understand that this involves a lot more than just hardware and Azure VMs.

You need to get clear on what exactly you're moving to the Cloud

For example, you need to map out your department's engineering applications and understand the dependencies: how they work with each other and depend on each other. You need to have an idea of what kind of data will move between the various engineering applications you use. You also want to understand whether these engineering applications depend on on-premises IT services.
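One lightweight way to capture such a map is a small dependency table that you can query. The Python sketch below is illustrative only: the application names, the service names, and the `on_prem_dependencies` helper are hypothetical placeholders, not part of any Azure or Ansys tooling.

```python
from collections import deque

# Hypothetical inventory: each application or service maps to what it depends on.
DEPENDENCIES = {
    "solver": ["license_server", "mesh_generator"],
    "mesh_generator": ["cad_vault"],
    "post_processor": ["solver"],
    "license_server": [],
    "cad_vault": [],
}

# Hypothetical set of services that currently run only in the on-premises datacenter.
ON_PREMISES = {"license_server", "cad_vault"}


def on_prem_dependencies(app, dependencies=DEPENDENCIES, on_premises=ON_PREMISES):
    """Return every on-premises service that `app` depends on, directly or indirectly."""
    seen = set()
    queue = deque(dependencies.get(app, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # already visited; also guards against circular dependencies
        seen.add(node)
        queue.extend(dependencies.get(node, []))
    return sorted(seen & on_premises)
```

Calling `on_prem_dependencies("post_processor")` on this sample inventory lists the on-premises services that would still be reached after a move, which is a useful starting point for the planning conversation.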

If you move your Ansys workflow to Azure but keep your post-processing systems in your data center, then you may end up increasing your network traffic and slowing your process, all while paying more!
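To get a rough feel for this network cost, you can estimate how long it takes to move a result set from the cloud back to an on-premises post-processing system. The sketch below is a back-of-the-envelope calculation under stated assumptions: the `transfer_hours` function, the default `efficiency` factor, and any sizes or link speeds you plug in are hypothetical and should be replaced with your own measurements.

```python
def transfer_hours(result_size_gb, link_mbps, efficiency=0.7):
    """Estimate the hours needed to move result data over a network link.

    result_size_gb: size of the result set in gigabytes (decimal, 1 GB = 8000 megabits)
    link_mbps:      nominal link speed in megabits per second
    efficiency:     assumed fraction of nominal bandwidth actually achieved (0 < x <= 1)
    """
    if result_size_gb < 0:
        raise ValueError("result_size_gb must be non-negative")
    if link_mbps <= 0:
        raise ValueError("link_mbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")

    size_megabits = result_size_gb * 8 * 1000
    seconds = size_megabits / (link_mbps * efficiency)
    return seconds / 3600
```

As a sanity check, 450 GB over a 1,000 Mbps link at an idealized efficiency of 1.0 works out to one hour. Real links rarely reach that, which is the reason for the adjustable `efficiency` parameter.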

Microsoft Azure is a cloud platform you can use to build, deploy, and manage solutions for a wide range of uses.

Azure is an open and flexible cloud platform that enables you to quickly build, deploy, and manage applications across a global network of Microsoft-managed datacenters. You can build applications using any language, tool, or framework. And you can integrate your public cloud applications with your existing IT environment.

Figure 1: Azure Offers a Dizzying Array of Services

Azure offers a dizzying array of services, and continues to add services at a blinding pace. In fact, this alone should be a good reason for enterprise IT teams to stop viewing Azure as competition and instead view it as another powerful tool in their arsenal to help their customers.

To illustrate the breadth of Azure for HPC, let's look at two specific services.

Azure IaaS

The first service is Azure Infrastructure-as-a-Service (IaaS). This is the classic do-it-yourself (DIY) approach: instead of buying hardware from a vendor, you lease VMs from Azure. Customers can then deploy their own middleware and end-user software to run their HPC workloads on Azure. IaaS is an efficient way to get infrastructure on demand, and the scalability and elasticity it provides are very desirable cloud features.

There are some limitations with this do-it-yourself approach. First, running HPC workloads on Azure is not a simple, one-time activity. There are many moving parts and multiple stakeholders, including the engineer, IT, and the software vendor. Second, you need the software itself in addition to the infrastructure.

At the other end of the spectrum is the Azure Marketplace. This is a Software-as-a-Service solution that gives customers a complete, self-service user experience. Even non-HPC users can easily launch entry-level HPC services. These services automatically deploy many popular engineering applications to Azure instances, giving engineers an easy-to-use, supported, zero-maintenance user experience.

Azure offers a service for every need. The key is knowing exactly what your users' pain points are and applying the right tool.

Now that we know a bit more about Azure, let's review why engineering organizations should use it at all. This includes a number of obvious and some not-so-obvious benefits.

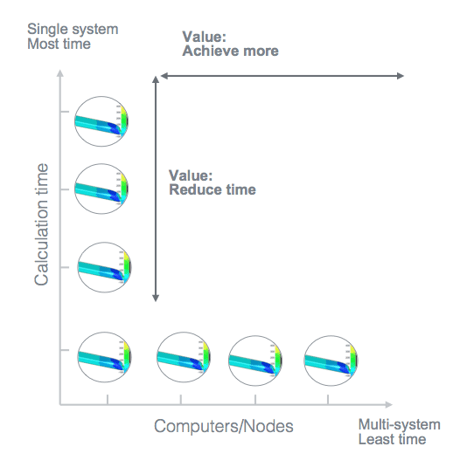

Figure 2: Advantages of parallel computing

Azure enables you to take advantage of parallel computing - something that your engineering software such as ANSYS is already optimized for. Parallel computing lets you do more and more in less and less time.
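How much faster a given simulation runs depends on how much of it can actually run in parallel. A common textbook model for this is Amdahl's law, which is not from the original text and is used here only as an illustration. In the sketch below, the `amdahl_speedup` function name and any parallel fractions you try are hypothetical; real solver scaling also depends on interconnect, memory, and licensing.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Theoretical speedup under Amdahl's law.

    parallel_fraction: share of the runtime that can be parallelized (0.0 to 1.0)
    cores:             number of cores the parallel part is spread across (>= 1)
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")

    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)
```

The model makes one practical point: the serial share of a job caps the achievable speedup (a job that is 95% parallel can never run more than 20 times faster, however many cores are added), so it is worth measuring how your own models scale before sizing a cluster.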

In addition, Microsoft partners with specialized HPC service providers to expand the value proposition further:

Microsoft has invested billions of dollars in a modern hyperscale infrastructure. The Azure platform is highly differentiated in two major infrastructure components that are required for HPC:

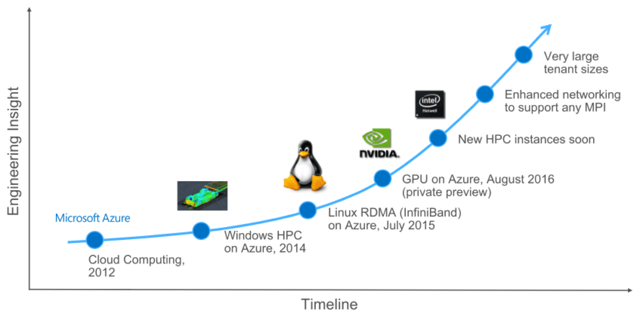

Figure 3: Evolution of HPC on Azure

Azure offers engineering workloads a distinct advantage and it is a good time for R&D organizations to exploit that advantage.

Microsoft also has a strong advantage in the context of enterprise engagements. Because HPC workloads carry strategic importance for enterprises, they require the capabilities of a focused enterprise vendor team. Having a Microsoft Enterprise Agreement in place that covers Microsoft products, services, and cloud is a great step towards a successful HPC implementation on Azure.

Now that you are convinced that Azure can deliver incredible benefits to your engineering workflow, it is time to set up your first project. After all, this is a new technology that needs to integrate into your own business processes. On the other hand, if you don't really need it to be integrated into your processes, you can simply use a self-service option that's available on the Azure Marketplace. But if you want to incorporate Azure as a tool in your engineering tool chain, then you need a methodical approach to your implementation.

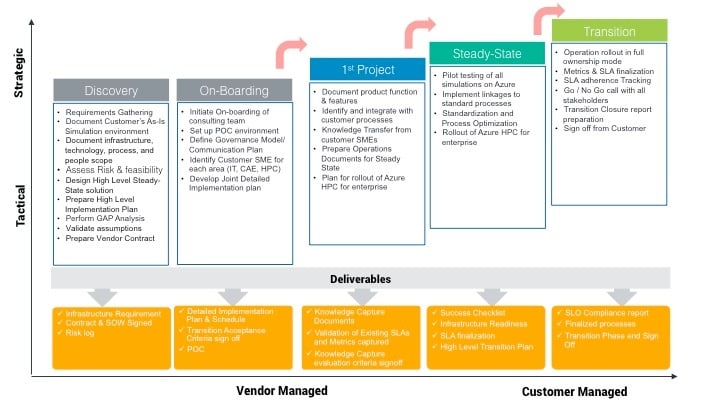

Fortunately, there are some common best practices you can employ to make sure you have covered all the bases. Figure 4 shows a sequence of steps that an organization needs to take including the important considerations, both technical and organizational, that must be tackled for a successful implementation.

Figure 4: Deployment Strategy for Simulations on Azure

Preparation

Before you begin, it's a good idea to document your in-house infrastructure so you know what you're dealing with. Establishing this as-is state can be very helpful down the road. Here are some example questions you should consider:

Getting these collated and documented gives you a good baseline to start.

Meet with your Microsoft AE and Partner Team

The next step should be to meet with the team that will be responsible for delivering the project. Make sure that a representative from the engineering organization (a user of HPC) attends the meeting.

It is a good idea to run a proof of concept (PoC) to test your own codes and models on Azure as you get ready to move into more widespread implementation.

Once you are successful with your first project on Azure, you will gain the confidence to start integrating Azure into your regular R&D workflow.

As you start integrating Azure into your R&D workflow, more stakeholders from IT, Procurement, and Legal need to get involved. It is a good idea to document what the shared responsibilities will be between you, Microsoft and the Microsoft partner who is helping you.

Here are some areas that you might want to consider as you take this next step:

At this stage it is critical that you get rigorous with your project planning. With so many stakeholders it is easy to get lost in who is doing what. One good way to document this is to agree on a set of assumptions. Here is an example of some common ones:

Data security is critical to organizations, regardless of whether that data is in the cloud or not. You want to know, "Is my engineering data secure in the cloud?" You want to work with a partner that maintains the highest standards of security to protect your privacy and your data. You need to validate that the partner enforces strict internal product controls and regularly audits its policies and procedures.

The primary areas of security include: Physical Security, System Security, Operational Security, Application and Data Security.

The security protocols you want to pay attention to are:

Engineering data is of strategic importance. Protection of this data from accidental deletion and unauthorized change or disclosure is a key part of any cloud implementation.

Microsoft has been leading the industry in establishing clear security and privacy requirements for cloud computing and then consistently meeting these requirements.

Microsoft datacenters are protected by layers of defense-in-depth security that include a variety of physical safeguards. Microsoft's Cyber Defense Operations Center (CDOC) is staffed by security response experts who help protect, detect, and respond 24/7 to security threats in real time.

Azure Storage offers a set of security features that help secure your storage account by using Role-Based Access Control (RBAC) and Microsoft Azure Active Directory (Azure AD). Azure also offers Storage Service Encryption, which encrypts data written to the storage account.

Finally, Azure meets a broad set of international and industry-specific compliance standards, such as ISO 27001, HIPAA, FedRAMP, SOC 1 and SOC 2, as well as country-specific standards like Australia IRAP, UK G-Cloud, and Singapore MTCS. Rigorous third-party audits, such as those by the British Standards Institution, verify Azure’s adherence to the strict security controls these standards mandate.

Figure 5: Azure has the Widest Certifications and Coverage

For more details, please read the UberCloud Azure Security White Paper.

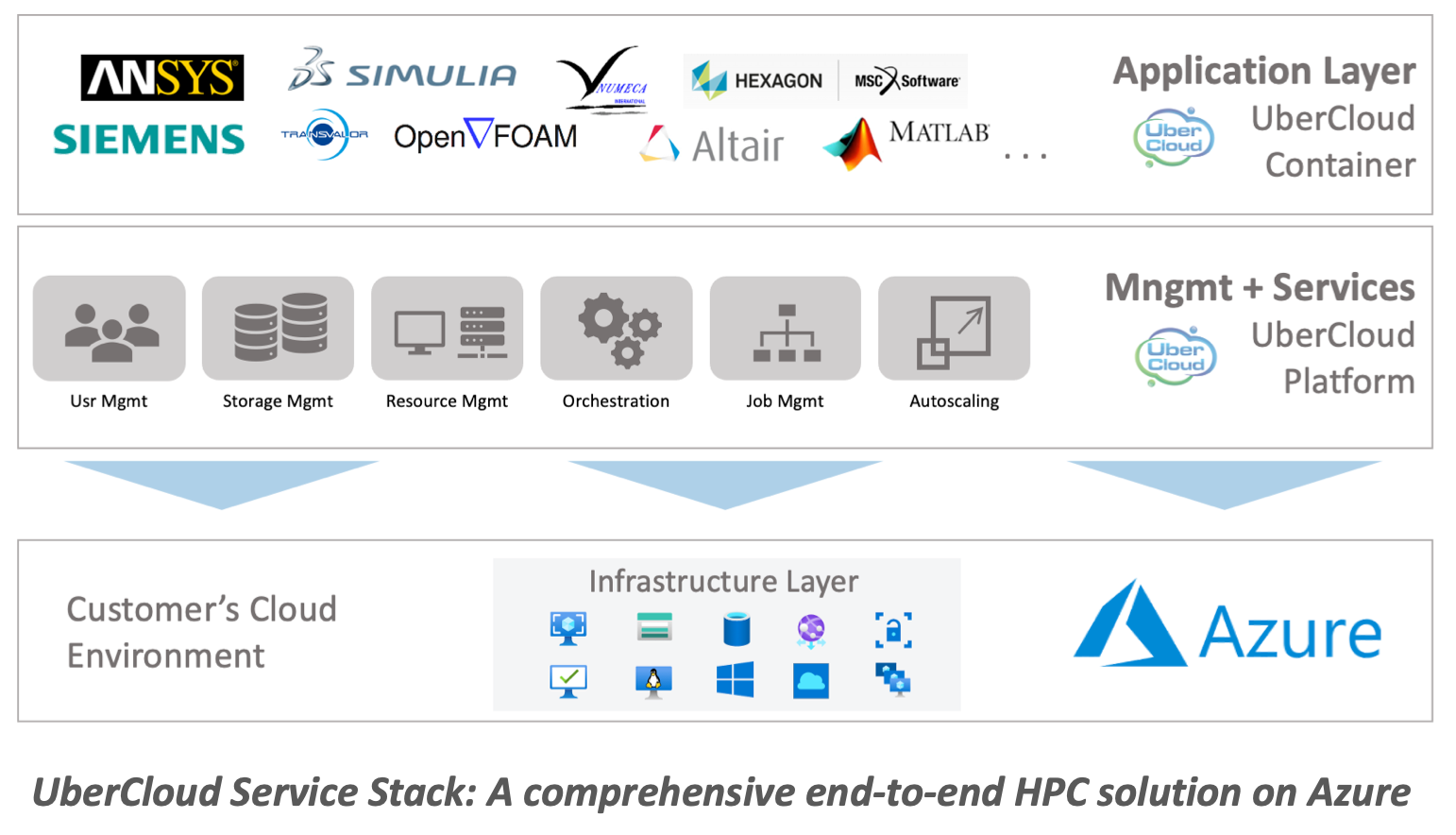

Computer Aided Engineering applications can scale to thousands of compute cores in the cloud. They can also be used to extend on-premises clusters. The UberCloud solution includes all the HPC capabilities you need including job scheduling, auto-scaling of compute resources, and more.

“Getting productive on Azure doesn't have to be hard. You just need the right combination of skills, tools and processes.”

Getting started with a complex FEA or CFD application on Azure can be daunting. Fortunately, this has been done before and there are ways to make this process fast, low-risk and inexpensive. Here’s what you need to remember.

Working with a partner can be helpful as you start down this journey. But you should insist that the partner show you how they can help you with the following: